First there was EII (Enterprise Information Integration), otherwise known as data federation. Data federation technologies allow one central data store (virtual or physical) to link to one or more remote data sources. The central store lets the consuming application query both the data it holds and the linked data from remote data sources through a single connection. On receiving a query, the central data store’s query engine issues sub-queries to the remote data stores to retrieve their data, then combines the returned results before presenting them to the user.
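As a rough illustration of that flow, here is a minimal Python sketch using two in-memory SQLite databases to stand in for the central store and one remote source. The table names, the sample rows and the `federated_order_totals` function are all assumptions made for this example; a real federation engine would plan and route sub-queries itself.

```python
import sqlite3


def make_store(schema, insert_sql, rows):
    """Create an in-memory SQLite database standing in for one data store."""
    conn = sqlite3.connect(":memory:")
    conn.execute(schema)
    conn.executemany(insert_sql, rows)
    return conn


# Hypothetical central store holding customer data.
central = make_store(
    "CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)",
    "INSERT INTO customers VALUES (?, ?)",
    [(1, "Acme"), (2, "Globex")],
)

# Hypothetical remote source holding order data.
remote = make_store(
    "CREATE TABLE orders (customer_id INTEGER, amount REAL)",
    "INSERT INTO orders VALUES (?, ?)",
    [(1, 100.0), (1, 50.0), (2, 75.0)],
)


def federated_order_totals(central_conn, remote_conn):
    """Answer one logical query by combining sub-queries to two stores."""
    # Sub-query 1: data held in the central store.
    customers = central_conn.execute(
        "SELECT id, name FROM customers ORDER BY id"
    ).fetchall()
    # Sub-query 2: sent to the remote source, which does the aggregation.
    totals = dict(
        remote_conn.execute(
            "SELECT customer_id, SUM(amount) FROM orders GROUP BY customer_id"
        ).fetchall()
    )
    # Combine the returned results before presenting them to the caller.
    return [(name, totals.get(cust_id, 0.0)) for cust_id, name in customers]


print(federated_order_totals(central, remote))
```

The consuming code only calls one function, yet the answer is assembled from two separate stores, which is the essence of federation.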

So what’s the difference between data federation and data virtualization? According to some definitions I found on the web, not a lot, but that is not the case. Rick van der Lans, in his BeyeNetwork article “Clearly Defining Data Virtualization, Data Federation and Data Integration”, does a good job of pointing out the differences between the two. For me, however, it’s about evolution.

Data federation as a concept is indeed a cornerstone of data virtualization. Data virtualization provides abstraction of data sources, allowing distributed data to be federated from a single place, combining data from sources in real time and presenting it back to the calling application in response to its query. However, data virtualization has evolved and grown from this core principle, surrounding it with more enterprise-scalable features that allow ‘federation’ to be applied in many use cases across a business. Data virtualization provides a more agile approach to data integration, one that helps satisfy the incessant appetite of businesses for consistent and accessible data: the data needed to power competitiveness in an increasingly digitised world.

Technologies focusing on data federation are lagging behind. Major database and warehouse appliance vendors, for example, provide their customers with bolt-ons that facilitate the federation of their own data stores with other databases. This allows ‘linked tables’ to be defined in their systems that provide access to data in remote databases. However, their vision is limited to their own products rather than focused on providing an enterprise capability.

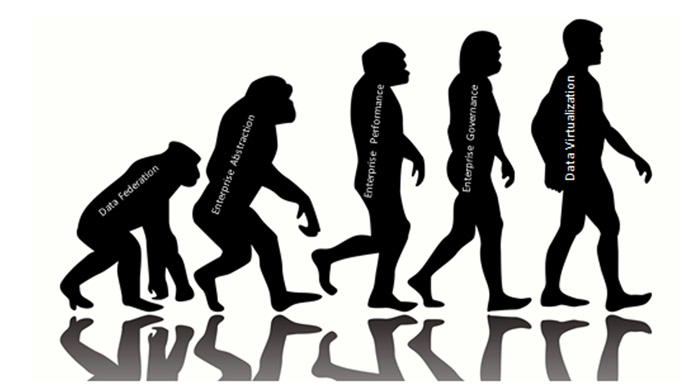

Data Federation’s Enterprise Evolution

Much as man has learned to use and reuse more sophisticated tools, to stand upright and run faster and to understand the world around him, data virtualization has broadly added three strategic capabilities to data federation: enterprise abstraction, enterprise performance and enterprise governance.

Enterprise Abstraction

While data federation supports one-off requirements to combine data, what if there is a wider abstraction strategy? Federating data via another data store can just create another data silo. Data virtualization technologies provide open access to a logical repository of data that doesn’t lock businesses into any dependent physical data store or appliance.

Does the data need to be reused across the enterprise? Data federation technologies provide capabilities to combine data from linked data stores, but published models are focused very much on the physical structure of the data. Data virtualization provides intuitive modelling tools to enable the publication of reusable logical data entity views, abstracting the physical implementation and providing centralised access to conformed data. These entity views can be published simply via many protocols (as SQL-queryable objects and Web Services) in a codeless manner, providing greater reuse and productivity.
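To make the idea of a logical entity view concrete, here is a small Python sketch under stated assumptions: the source names, physical column names and the `CUSTOMER_VIEW` mapping are all hypothetical, and real data virtualization tools define such views through modelling tools rather than hand-written code.

```python
# Hypothetical physical rows, each source using its own column names.
crm_rows = [{"CUST_NM": "Acme", "CTRY_CD": "GB"}]
billing_rows = [{"customer_name": "Globex", "country": "US"}]

# Hypothetical logical "customer" view: logical field -> physical column,
# defined once per source.
CUSTOMER_VIEW = {
    "crm": {"name": "CUST_NM", "country": "CTRY_CD"},
    "billing": {"name": "customer_name", "country": "country"},
}


def customer_entities(source, rows):
    """Project physical rows from one source onto the logical customer view."""
    mapping = CUSTOMER_VIEW[source]
    return [
        {logical: row[physical] for logical, physical in mapping.items()}
        for row in rows
    ]


# Consumers see one conformed shape, whatever the physical layout.
customers = customer_entities("crm", crm_rows) + customer_entities(
    "billing", billing_rows
)
print(customers)
```

Consumers work only with `name` and `country`; if a source renames a column, only the mapping changes.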

To provide enterprise-grade abstraction, the breadth of data sources that need to be accessed can be a challenge. There may be a simple set of data sources to integrate initially, but what about in the future? Data federation technologies are typically limited to integrating relational data stores, whereas data virtualization expands connectivity agnostically to include, for example, any flavour of RDBMS, data appliance, NoSQL, Web Services, SaaS and enterprise applications.

Enterprise Performance

Is the performance optimisation maturity of data federation tools enterprise class? Data virtualization vendors focus on providing optimisations specifically designed for combining data across multiple systems. These optimisations leverage the underlying processing power of the data sources in the most efficient manner and include sophisticated dynamic query optimisation and caching features. Data federation optimisation features provided by data store vendors, in contrast, are relatively new in the market and lack the maturity of the optimisations provided by data virtualization vendors, relying on the processing power of the data store itself to handle query load.

Enterprise Governance

Data federation tools lie firmly in the technical domain of the database administrator. Data virtualization now enables consumers to understand and discover the data published in the logical model via simple-to-use interfaces. Browser-based self-service tooling exposes data virtualization metadata, allowing users to understand the lineage of the data from published model to data source, and also to query, search and browse data. This enables users to discover relevant information published in the virtual model that can then be consumed without the need for specialist technical knowledge.

In addition, data virtualization has evolved a robust and flexible security model that enables data to be published across the enterprise securely. Data virtualization implements a consistent security model across heterogeneous data sources that may have differing security levels and implementations. For example, in logical warehousing scenarios data may need to be combined from spreadsheets, Hadoop, warehouse appliances and cloud applications, all with different security models; the published data, however, can be accessed via a common interface with consistent security and masking rules applied to the data no matter where it originates.
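The sketch below shows, in Python, what one masking rule applied uniformly across sources might look like. The column names, roles and the `apply_policy` function are assumptions for illustration only and do not reflect any particular product’s security model.

```python
# Hypothetical policy: these column names and roles are illustrative only.
MASKED_COLUMNS = {"email", "salary"}
UNMASKED_ROLES = {"data_steward"}


def apply_policy(rows, role):
    """Apply the same masking rule to rows regardless of their origin."""
    if role in UNMASKED_ROLES:
        return [dict(row) for row in rows]
    return [
        {col: ("***" if col in MASKED_COLUMNS else val) for col, val in row.items()}
        for row in rows
    ]


# Rows standing in for two sources with different native security models.
spreadsheet_rows = [{"name": "Ann", "email": "ann@example.com"}]
cloud_app_rows = [{"name": "Bob", "salary": 50000}]

published = apply_policy(spreadsheet_rows + cloud_app_rows, role="analyst")
print(published)
```

Because the rule lives in the publishing layer rather than in each source, an analyst sees masked values whether a row came from the spreadsheet or the cloud application.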

Data Virtualization Continues to Evolve

The evolution of data virtualization, like that of Man, won’t just stop here. Vendors will continue to push the boundaries of data virtualization to support ever-advancing enterprise use cases and the demands of the enterprise for abstraction, performance and governance.

In this blog I’ve tried to articulate my thoughts on how data virtualization has surpassed data federation as an enterprise-scalable integration capability. I’d welcome your thoughts, including any that differ, so feel free to add comments below.
